Empowering Underwriters: The Convergence of Human Experience and Technology

Underwriting plays a crucial role in the success of an insurance carrier by assessing and evaluating the risks associated with insuring individuals, businesses, and assets. Despite the significant progress made in digitization and access to prefill and third-party data, there is still ample room for innovation to realize straight-through processing and improve the underwriting process.

Fortunately, the integration of data and analytics capabilities into selling, underwriting, and servicing customers is becoming mainstream in the insurance industry. The top carriers are investing heavily and leading the pack by building advanced data and analytics underwriting capabilities that deliver substantial value. Leading insurers are improving loss ratios, generating healthy new business premiums, and driving profitable growth through data-informed underwriting. We anticipate that carriers will increasingly use data and analytics to proactively assess their portfolio underwriting practices, much as hedge funds do when predicting capital markets, and to identify market opportunities ahead of the competition.

This article explores the power and promise of data-informed underwriting, examining the unique challenges that underwriters face and the latest data and analytics capabilities and innovations that insurers must adopt to fully transform the underwriting process and cater to the ever-changing needs of their customers. Join us on this journey to discover how these advancements are poised to disrupt the industry and create a brighter future for insurance underwriting.

Underwriting Challenges Still Prevailing In The Industry

In recent years, significant advancements in the use of third-party data and analytics models in underwriting have transformed the way insurers approach the process. Utilizing advanced analytics, insurers have been able to analyze vast amounts of data and incorporate a more insight-driven approach to assess and evaluate risks.

Despite the notable advancements, challenges persist in the underwriting process, such as outdated technology, poor data quality, and a lack of inline analytics and models to augment underwriting decisions. Addressing these challenges is crucial to improving the accuracy and efficiency of underwriting and meeting the evolving needs of producers and policyholders.

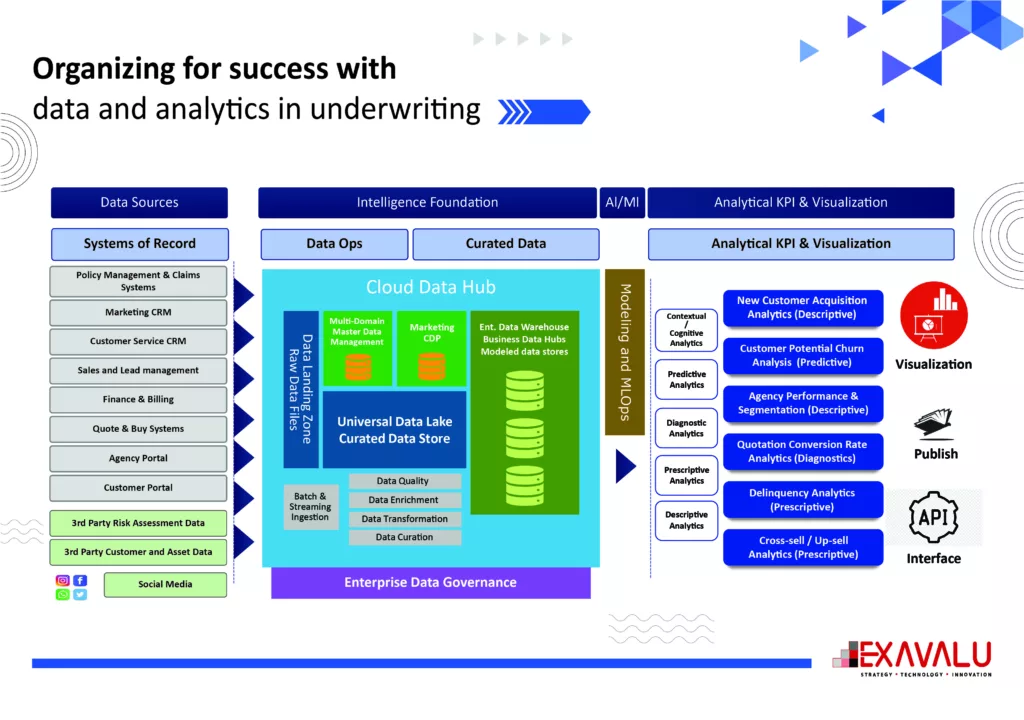

Organizing for success with data and analytics in underwriting

Diverse internal and external data sources are available to fuel a new underwriting engine, and inline artificial intelligence-based models may unlock valuable new insights. Data is one of the most valuable assets insurers have, and predictive analytics has been helping businesses make the most of that data. However, most carriers are behind in their use of cloud-enabled analytics platforms built on modern data science and machine learning. From anticipating customer behavior to supporting straight-through processing in underwriting, AI and analytics are paying off for the leading carriers that have adopted cloud and Machine Learning Operations (MLOps) to enable data-informed operations.

- Data Acquisition

High underwriting expenses often stem from the efforts associated with obtaining customer and risk information through back-and-forth communication involving producers, customers, investigators, and third parties, among others. Streamlining data collection from multiple digital sources including internal enterprise systems and external data providers through pre-fill will improve the decision-making accuracy and bring down expenses drastically.

For example, client interaction data can be gathered from CRM systems, loyalty management systems, and contact center systems; policy and claims data from policy and claims systems; and premium payment data from billing systems. Insurers may also use external sources to gather relevant data for new business or renewals. For instance, environmental risks can be evaluated from satellite or drone imagery overlaid with weather events, such as floods. Meanwhile, internal operational risks can be assessed through data sharing on machinery breakdowns, accidents, and other unforeseen events.
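
A minimal sketch of the prefill idea follows. The field names, the record layouts, and the rule that internal data takes precedence over third-party data are all assumptions made for illustration; they do not come from any specific vendor system.

```python
from typing import Any

# Hypothetical field list for a submission; a real form differs by line of business.
REQUIRED_FIELDS = ["insured_name", "address", "year_built", "prior_claims", "flood_zone"]


def prefill(internal: dict[str, Any], external: dict[str, Any]) -> tuple[dict[str, Any], list[str]]:
    """Merge sources field by field, preferring internal data when it is present."""
    merged: dict[str, Any] = {}
    missing: list[str] = []
    for field in REQUIRED_FIELDS:
        value = internal.get(field)
        if value is None:
            value = external.get(field)  # Fall back to third-party data.
        if value is None:
            missing.append(field)  # Still has to be requested from the producer.
        else:
            merged[field] = value
    return merged, missing


# Illustrative data only.
policy_system = {"insured_name": "Acme Tools LLC", "address": "12 Mill Rd", "prior_claims": 0}
third_party = {"address": "12 Mill Road", "year_built": 1987}

application, still_needed = prefill(policy_system, third_party)
print(application)
print(still_needed)
```

Only the fields left in `still_needed` require back-and-forth with the producer or customer, which is where the expense reduction described above would come from.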

- Data Management system

Inaccurate or incomplete data caused by the lack of proper data management processes can lead to serious underwriting errors, which in turn damage a carrier’s growth, profitability, and reputation.

To prevent this, a carrier’s data strategy must include diverse ways of obtaining and securing access to external data, and ways to combine it with internal sources to formulate insights and models that inform the carrier’s underwriting practices. The starting point of this transformation is to improve the data assimilation process by applying a Data Fabric capability to rationalize, unify, and enhance sources of data. The ability to identify valuable data sources and streamline data collection eliminates the otherwise costly back-and-forth between stakeholders to obtain customer and risk-related information.

To address these challenges, insurers must adopt effective data management techniques and systems, such as data ingestion, transformation, and integration capabilities, and centralized data stores like data lakes and data warehouses. The adoption of data quality management, data governance, and master data management also plays a key role in enabling experimentation and model development at scale. A cloud-based big data capability to ingest, manage, and govern enterprise data in data lakes can be a good fit for today’s enterprise. Data lakes serve as massive storage reservoirs that hold vast quantities of raw data in its native form, whether unstructured, semi-structured, or structured. The data structure and requirements remain undefined until the data is needed. Together, these systems enable the carrier to provide data where it is needed, from inline insights for decision support to descriptive, predictive, and prescriptive analytics.

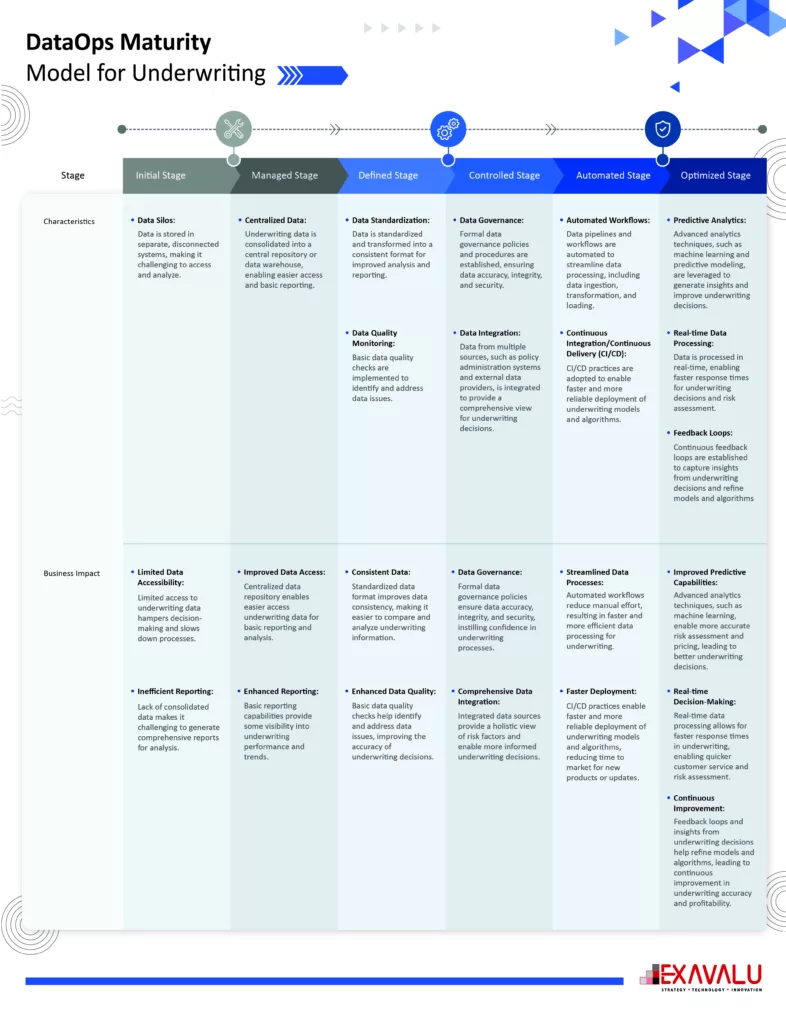

- Data Governance and DataOps capability

To make use of the raw data that arrives in a data lake, organizations use a governed data lake that houses structured and unstructured data and exposes trusted, secured, and governed data layers for consumption. Governed data lakes make it possible to discover, understand, share, and confidently act on that information. Data without context lacks meaning. Data governance is a discipline that ensures data is secure, private, accurate, current, and usable. It sets internal standards, or data policies, that govern how data is gathered, stored, processed, and disposed of. It determines the access permissions for different types of data and defines which data falls under its purview. This certifies that enterprise data is fit for consumption in analytics and business decision support.
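
One way to picture data policies is as a small catalog that maps each data classification to the roles allowed to read it and a retention period. The sketch below assumes hypothetical classifications, roles, column names, and retention values; it is not a description of any particular governance product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataPolicy:
    allowed_roles: frozenset
    retention_days: int


# Hypothetical policy catalog keyed by data classification.
POLICIES = {
    "public": DataPolicy(frozenset({"underwriter", "analyst", "agent"}), 3650),
    "confidential": DataPolicy(frozenset({"underwriter", "analyst"}), 2555),
    "restricted": DataPolicy(frozenset({"underwriter"}), 2555),
}

# Hypothetical mapping of columns to classifications.
CLASSIFICATION = {
    "policy_number": "public",
    "annual_premium": "confidential",
    "medical_notes": "restricted",
}


def can_read(role: str, column: str) -> bool:
    """Columns without a classification are denied by default."""
    level = CLASSIFICATION.get(column)
    return level is not None and role in POLICIES[level].allowed_roles


def governed_view(role: str, row: dict) -> dict:
    """Return only the columns the role is permitted to read."""
    return {col: val for col, val in row.items() if can_read(role, col)}


row = {"policy_number": "WC-1001", "annual_premium": 18500, "medical_notes": "n/a", "scratch": "x"}
print(governed_view("analyst", row))
```

Denying unclassified columns by default is a design choice in this sketch: data that has not yet been brought under governance is not exposed for consumption.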

Inspired by the DevOps concept, the DataOps strategy strives to speed the production of applications running on big data processing frameworks. The objective is to guarantee that an organization’s data is utilized in the most adaptable and efficient way to achieve favorable and dependable business results. DataOps spans a number of technology disciplines such as data extraction, data ingestion & transformation, data quality, data governance, access control, data center capacity planning and system operations. DataOps-enabled tools foster teamwork, coordination, data integrity, security, accessibility, and user-friendliness.

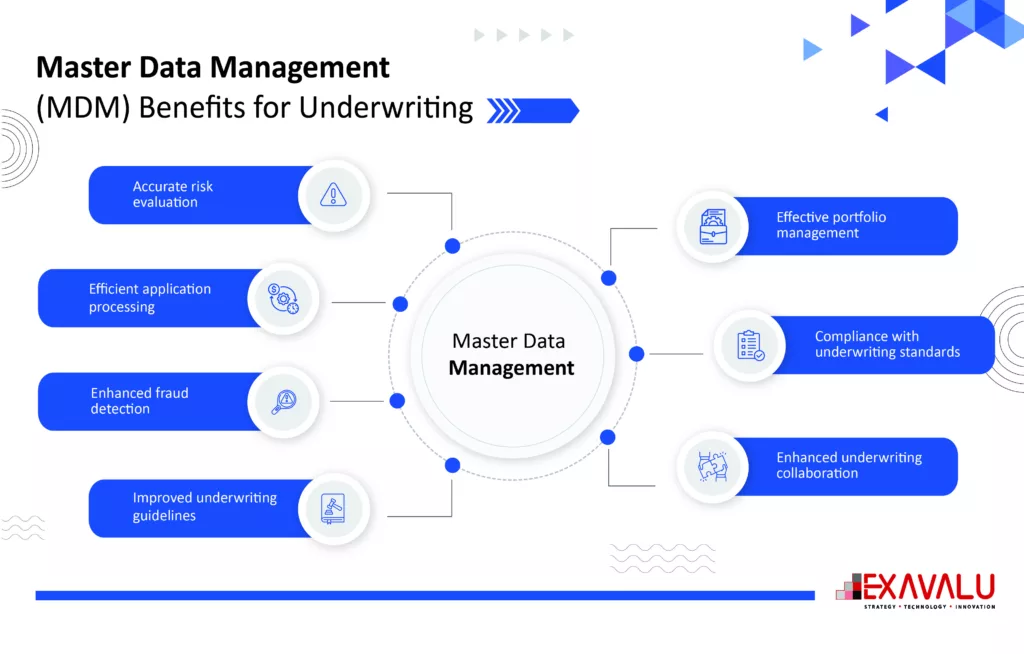

- Adopt Master Data Management (MDM) Solution

MDM is the process of creating and maintaining a single, authoritative source of master data for an organization. Insurers should deploy a multi-domain MDM system to ensure that accurate and unique customer, agent, insured asset, and claims master data is available for further consumption. Customer data is spread across multiple enterprise systems today, preventing insurers from obtaining a unified view. MDM systems consolidate, standardize, and uniquely identify key master data entities, such as customers, assets, claims, and agents, along with their relationships, for downstream systems to consume. The resulting golden copy is then used by enterprise systems to streamline operations such as point-of-sale cross-sell, customer service, claims intake, and analytical use cases.

The multi-domain MDM system also adds the capability to group individual policies and accounts within a household or family. This allows insurers to identify patterns and trends that are not visible at the individual policy level, while also honoring customer preferences for omni-channel communication, billing, and notices. Data privacy and security can also be addressed. Improving customer insights through a deeper understanding of behaviors, preferences, and needs can help underwriters make more informed decisions, offer more targeted and personalized products and services, reduce risk, and enhance customer satisfaction.
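
The consolidate, standardize, and uniquely identify steps, plus householding, can be illustrated with a toy sketch. The match rule (normalized name plus date of birth), the survivorship rule (the most recently updated non-empty value wins), and every field name are assumptions for illustration; commercial MDM products use far richer matching.

```python
from collections import defaultdict


def standardize(record: dict) -> dict:
    """Collapse whitespace and normalize casing so equivalent values compare equal."""
    return {
        **record,
        "name": " ".join(record["name"].split()).title(),
        "address": " ".join(record["address"].split()).upper(),
    }


def build_golden_records(records: list[dict]) -> list[dict]:
    """Group records by a match key and merge each group into one golden record."""
    groups: dict[tuple, list[dict]] = defaultdict(list)
    for rec in map(standardize, records):
        groups[(rec["name"], rec["dob"])].append(rec)

    golden = []
    for master_id, group in enumerate(groups.values(), start=1):
        merged: dict = {"master_id": master_id, "source_ids": []}
        # Survivorship: later updates overwrite earlier ones; empty values never win.
        for rec in sorted(group, key=lambda r: r["updated"]):
            merged["source_ids"].append(rec["source_id"])
            for key in ("name", "dob", "address", "email"):
                if rec.get(key):
                    merged[key] = rec[key]
        golden.append(merged)
    return golden


def households(golden: list[dict]) -> dict[str, list[int]]:
    """Group golden records that share a standardized address."""
    result: dict[str, list[int]] = defaultdict(list)
    for rec in golden:
        result[rec["address"]].append(rec["master_id"])
    return dict(result)


# Illustrative records from three hypothetical source systems.
source_records = [
    {"source_id": "CRM-1", "name": "jane  doe", "dob": "1980-04-02",
     "address": "5 Elm St", "email": "", "updated": "2022-01-10"},
    {"source_id": "POL-9", "name": "Jane Doe", "dob": "1980-04-02",
     "address": "5 elm st", "email": "jane@example.com", "updated": "2023-03-01"},
    {"source_id": "CLM-4", "name": "John Doe", "dob": "1978-11-23",
     "address": "5 Elm St ", "email": "", "updated": "2021-07-15"},
]

golden_records = build_golden_records(source_records)
print(golden_records)
print(households(golden_records))
```

Here the two Jane Doe records collapse into one golden record that keeps links to both source systems, and both customers land in the same household because their standardized addresses match.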

- Establish Best-fit Enterprise Data Model to Enable Analytics

Data holds immense value for insurance companies, and leveraging analytics can unlock significant business benefits. However, harnessing the full potential of data requires robust data modeling capabilities. An Enterprise Data Model offers a comprehensive perspective on the data generated and used within an organization. It provides a unified and unbiased representation of data, and their interrelationships, independent of specific source systems or applications. The model presents a holistic view of the data relevant to the business and the governing rules associated with them.

Analytics is generated from data that has already been organized through processing as defined in the data model. There are several categories of analytics. Descriptive analytics is generated from organized, modeled data in a data warehouse or data marts and supports everyday business intelligence reporting and decision-making. Predictive and prescriptive analytics are generated from historical or real-time data (structured or unstructured) through machine learning algorithms to produce additional business insights. Embedded analytics, which is generated while ingesting real-time streaming data, is also gaining popularity. It can be used to trigger real-time campaigns or next-best offers for target customers.

- Artificial Intelligence (AI), Machine Learning (ML) and MLOps

At its core, AI/ML uses algorithms and statistical models to enable machines to learn and either make decisions without human intervention or augment human judgment with additional insights. Analytics, AI, and ML solutions thrive on data. In other words, modern data ecosystems that generate quality enterprise data improve the effectiveness of AI and analytics solutions. AI/ML helps identify patterns and anomalies in vast amounts of data, providing valuable insights that human analysts may miss.

Though ML technology is being applied to solve a variety of issues with great success, most data scientists hit bottlenecks when deploying ML models. Research shows that a lack of structure and formalized processes around the ML lifecycle is responsible. This is where MLOps comes into play. Inspired by the DevOps concept, MLOps offers a collection of practices that establish a structured and segmented approach to deploying, monitoring, and retraining machine learning models. It helps improve the quality of production models while incorporating business and regulatory requirements and model governance. It provides a framework for managing the ML lifecycle effectively by pairing business expertise with technical know-how through iterative workflows. Machine learning is a relatively young field, and regulatory bodies regularly modify their requirements and revise their guidelines. MLOps helps teams stay in compliance with these shifting regulations, which are prevalent in the financial services industry.

- Cloud Computing trends in Insurance

Cloud computing and big data analytics are rapidly becoming mainstream in insurance as carriers have realized their benefits over the last few years. The insurance sector is highly competitive and regulated, and carriers need to react quickly to market changes by offering new services and products.

New capabilities, business features, and products can be more quickly developed, tested, and launched in a cloud than in traditional environments. This advantage is especially beneficial for those who are quick to adapt, as they can swiftly respond to market changes. Cloud technologies and tools enable better asset utilization and more-flexible operating models. Advanced cloud capabilities allow companies to generate insights that previously demanded intensive resources to develop. To achieve successful cloud transformations, it’s crucial to select and train employees to consider operational expenses when evaluating cloud costs. Collaborating with teams to foster innovation and cultivating cloud champions are vital in helping the entire organization grasp the business advantages of the cloud.

How To Start Optimizing Underwriting For Success:

Progressive underwriting organizations are empowered by predictive analytics, artificial intelligence, and digital capabilities that allow them to scale, improve sales, and be profitable. Their underwriters gain significant leverage from interactive tools and data-driven insights, allowing them to handle substantially larger books of business with more precision and control. They are able to use data throughout the underwriting process to inform decisions on prioritization of prospects, identification of risks, policy structuring, and pricing. They can get full control of continuously evolving risk models that incorporate ever-expanding views of risk characteristics, tailored by line, segment, and emerging-loss trends.

The success of an underwriting platform will depend on real-time availability of relevant data from the ecosystem, scalability of the platform to access new data sources, and maturity of risk-monitoring models to generate relevant insights for underwriters. Data can be collected from telematics, agent interactions, customer interactions, smart homes, satellite imagery, hazard databases, and social media to help carriers better understand and manage customer relationships, claims, and underwriting. This data can be used to build next-generation analytical solutions that augment underwriting decisions in real time to prevent material losses and promote straight-through processing efficiencies.

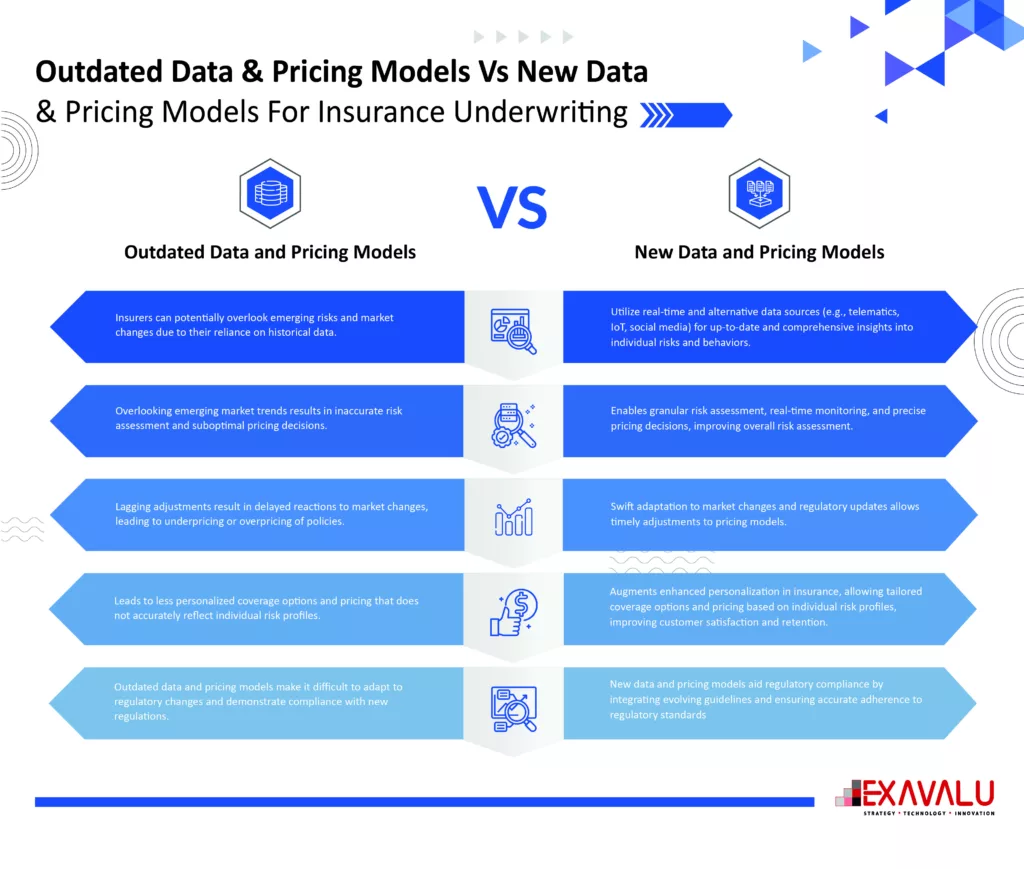

- Overreliance On Outdated Data & Pricing Models

Traditional underwriting processes rely on manual work and outdated data management capabilities, which leads to time-consuming and inefficient practices. Moreover, the industry’s overreliance on outdated data and pricing models that do not accurately reflect current market conditions or the unique risks associated with specific policyholders or assets can result in underwriting errors that leave policyholders underinsured or overinsured, negatively impacting the policyholder experience.

Thus, insurance carriers must adopt new risk models that incorporate a broader range of data sources, including real-time data and advanced analytics, to improve their risk assessments and pricing decisions. These models largely use data taken directly from sources that are current and relevant, such as social media, smart devices, weather feeds, and interactions between claims specialists and customers.

- Predicting Customers At Risk

Predictive analytics can help carriers to identify customers who are at risk of cancelling their policy or not paying their premiums. More advanced data insights from contact center data or other third-party data sources may help insurers identify customers who are likely to churn. Having this knowledge will give carriers an advantage, enabling them to proactively address potential issues and provide personalized attention. Without predictive analytics, insurers risk overlooking important warning signs and wasting valuable time resolving problems.
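
As a sketch of how such a model's output might be used, the snippet below scores policyholders with a logistic function and lists those above a threshold. The features, the coefficients, and the 0.5 threshold are invented for illustration; in practice they would be fitted to a carrier's own lapse and payment history.

```python
import math

# Hypothetical coefficients; a real model would be fitted to historical lapse data.
INTERCEPT = -2.0
WEIGHTS = {
    "late_payments_12m": 0.8,
    "complaint_calls_12m": 0.6,
    "premium_increase_pct": 0.05,
    "tenure_years": -0.15,
}


def churn_probability(features: dict[str, float]) -> float:
    """Logistic model: p = 1 / (1 + exp(-(intercept + sum(w_i * x_i))))."""
    z = INTERCEPT + sum(WEIGHTS[name] * features.get(name, 0.0) for name in WEIGHTS)
    return 1.0 / (1.0 + math.exp(-z))


def at_risk(customers: dict[str, dict[str, float]], threshold: float = 0.5) -> list[str]:
    """Return customer ids whose score meets the threshold, highest risk first."""
    scored = {cid: churn_probability(f) for cid, f in customers.items()}
    flagged = [cid for cid, p in scored.items() if p >= threshold]
    return sorted(flagged, key=lambda cid: scored[cid], reverse=True)


# Illustrative customers only.
book = {
    "C-001": {"late_payments_12m": 3, "complaint_calls_12m": 2,
              "premium_increase_pct": 12, "tenure_years": 1},
    "C-002": {"late_payments_12m": 0, "complaint_calls_12m": 0,
              "premium_increase_pct": 3, "tenure_years": 9},
}
print(at_risk(book))
```

The ranked list is what would let a retention team give personalized attention to the highest-risk policyholders first.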

- Identifying Risk of Fraud

Despite battling various instances of fraud, insurers often remain unsuccessful. The Coalition Against Insurance Fraud estimates that $80 billion is lost annually from fraudulent claims in the United States alone. Additionally, fraud makes up 5-10% of claims costs for insurers in North America.

With predictive analytics, carriers can proactively detect and prevent fraud or take corrective action afterward. Insurers often use social media to uncover signs of fraudulent behavior by monitoring insured individuals’ online activities after a claim is resolved. Insurers are also relying on predictive modeling for fraud detection. “Where humans fail, big data and predictive modeling can identify mismatches between the insured party, third parties involved in the claim (e.g. repair shops) and even the insured party’s social media accounts and online activity.”

By continuously collecting and analyzing data, risk categories can be swiftly reclassified based on newly available information. This alters the probability and impact of risk factors in the current underwriting model. With real-time assessment of dynamic economic scenarios, timely recommendations for risk mitigation can be communicated to customers for necessary corrective action.

- Predicting Future Claims

Insurers can identify claims that may become unexpectedly high-cost losses in the future. By employing effective predictive analytics, P&C insurers can examine past claims for similarities and proactively notify claims specialists. This early alert system allows insurers to reduce outlier claims by anticipating potential losses or related complications. Moreover, insurers can proactively utilize insights gained from outlier claim data to develop strategies for handling similar claims in the future.
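
One simple way to picture this early alert is to measure, for each group of similar past claims, how often the claim ended as a high-cost loss, and then flag open claims that fall into the groups with a high rate. The similarity key, the cost cut-off, and the alert threshold below are assumptions chosen for illustration, not a recommended model.

```python
from collections import defaultdict

HIGH_COST = 100_000  # Hypothetical cut-off for an outlier closed claim.


def segment(claim: dict) -> tuple:
    """Hypothetical similarity key: claims sharing these traits are treated as alike."""
    return (claim["injury"], claim["attorney_involved"])


def high_cost_rates(closed_claims: list[dict]) -> dict[tuple, float]:
    """Share of closed claims in each segment that ended at or above HIGH_COST."""
    totals: dict[tuple, int] = defaultdict(int)
    high: dict[tuple, int] = defaultdict(int)
    for claim in closed_claims:
        key = segment(claim)
        totals[key] += 1
        if claim["paid"] >= HIGH_COST:
            high[key] += 1
    return {key: high[key] / totals[key] for key in totals}


def early_alerts(open_claims: list[dict], rates: dict[tuple, float], min_rate: float = 0.5) -> list[str]:
    """Ids of open claims whose segment historically turned high-cost often enough."""
    return [c["id"] for c in open_claims if rates.get(segment(c), 0.0) >= min_rate]


# Illustrative history and open claims only.
closed = [
    {"injury": "back", "attorney_involved": True, "paid": 150_000},
    {"injury": "back", "attorney_involved": True, "paid": 120_000},
    {"injury": "back", "attorney_involved": True, "paid": 20_000},
    {"injury": "sprain", "attorney_involved": False, "paid": 3_000},
    {"injury": "sprain", "attorney_involved": False, "paid": 5_000},
]
open_claims = [
    {"id": "O-1", "injury": "back", "attorney_involved": True},
    {"id": "O-2", "injury": "sprain", "attorney_involved": False},
    {"id": "O-3", "injury": "burn", "attorney_involved": False},
]

rates = high_cost_rates(closed)
print(early_alerts(open_claims, rates))
```

Claims in segments with no history, such as `O-3` here, are not flagged by this sketch; a production approach would need an explicit rule for unseen segments.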

Advancements in analytics have also helped transform the claims process. A systematic approach makes it possible to propose risk mitigation measures and convert certain risks into acceptable categories. It can help insurers avoid unfavorable risks in certain cases.

- 360-degree view of Customers

Insurers can obtain a 360-degree view of customers by aggregating data from the policy systems and other touch point systems that a customer uses to contact the company to purchase products and receive services and support. The Master Data Management solution creates a single view of customers, agents, and assets, along with their relevant data attributes and relationships, in the data management system. Customer data is then used to generate new insights that provide a more complete picture of a customer, such as contact preferences, relationship with the company, householding, cross-sell opportunities, buying propensity, and risk profile.

Customers today value a customized experience. Creating a foundation to know your customers and their relationships with the carrier enables opportunities to serve them better. This will provide a competitive advantage that saves carriers time, money, and resources, while helping customers feel connected to the carrier’s product, service and claim experience.

Case Study: Enhancing Underwriting For Large Workers’ Compensation Insurers With Improved Scoring Engine

When a large US-based workers’ compensation insurer wanted to enhance its underwriting operation, it partnered with Exavalu for expert consulting and implementation services. Our team leveraged advanced analytics and machine learning to deliver a custom scoring engine, accelerating the underwriting decision-making process for our client.

Burdened by a manual risk assessment and score generation process, the US-based workers’ compensation insurer asked Exavalu for a new automated scoring engine that would:

- Eliminate manual risk assessment processes

- Accelerate underwriting risk score generation with improved accuracy

Our client also needed the risk scoring engine to be integrated with Guidewire PolicyCenter for real-time risk score generation.

Our Response To Client’s Requirements

Exavalu leveraged our deep insurance domain and Guidewire integration expertise to help our client transform the risk assessment and score generation process for underwriting. We started by integrating Guidewire PolicyCenter and other sub-systems with cloud-based models developed using Python and Experian integration. Our team also developed APIs using MuleSoft to automate the underwriter risk assessment and implemented a reusable caching mechanism for third-party information retrieval and cost reduction.
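
The article does not describe how the caching mechanism was built. As a general illustration of the idea, the sketch below wraps a billable third-party lookup in a time-to-live cache so that repeated requests for the same business within the window do not trigger another paid call. The class name, the `fetch` callable, the stand-in lookup function, and the TTL value are all hypothetical.

```python
import time
from typing import Any, Callable


class TTLCache:
    """Cache the results of an expensive lookup for ttl_seconds."""

    def __init__(self, fetch: Callable[[str], Any], ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._fetch = fetch
        self._ttl = ttl_seconds
        self._clock = clock
        self._store: dict[str, tuple[float, Any]] = {}

    def get(self, key: str) -> Any:
        now = self._clock()
        entry = self._store.get(key)
        if entry is not None and now - entry[0] < self._ttl:
            return entry[1]  # Fresh hit: no third-party call is made.
        value = self._fetch(key)  # Miss or expired entry: call the provider once.
        self._store[key] = (now, value)
        return value


# Stand-in for a billable third-party call; records every invocation.
calls = []


def fake_credit_lookup(business_id: str) -> dict:
    calls.append(business_id)
    return {"business_id": business_id, "score": 72}


cache = TTLCache(fake_credit_lookup, ttl_seconds=3600)
cache.get("B-100")
cache.get("B-100")  # Served from the cache.
cache.get("B-200")
print(len(calls))
```

Passing the clock in as a parameter keeps the expiry logic testable without waiting for real time to elapse. The right TTL depends on how quickly the third-party data goes stale and on the provider's terms of use.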

Successful Implementation of A Real-time Scoring Engine Boosts Underwriting Efficiency And Risk Management For Client

Through our innovative approach, we were able to fulfill our client’s requirements and significantly enhance their straight-through processing and risk avoidance capabilities. The implementation of our new scoring engine greatly improved the response time for risk assessment and score generation to just 2-3 seconds, resulting in a much more efficient and effective risk management system. Our solution also resulted in a higher availability of their risk-scoring solution and operational dashboard, which effectively improved their business visibility.

Conclusion

The insurance industry is on the cusp of a major transformation, thanks to the integration of advanced Analytics, enablement of a data fabric and abundance of internal and external data for analysis and model development to support the underwriting process. This shift presents a unique opportunity for insurers to leverage cutting-edge solutions and optimize data management practices to enhance risk assessment capabilities, streamline underwriting operations, improve conversions and cross sell, and provide more personalized coverage to customers. By embracing innovative technologies such as machine learning, artificial intelligence, and predictive modeling, insurers can gain a competitive edge in a crowded marketplace while improving operational efficiency and reducing costs. As the insurance landscape continues to evolve, those who are quick to adopt these transformative solutions will be the ones who thrive in the future of insurance.